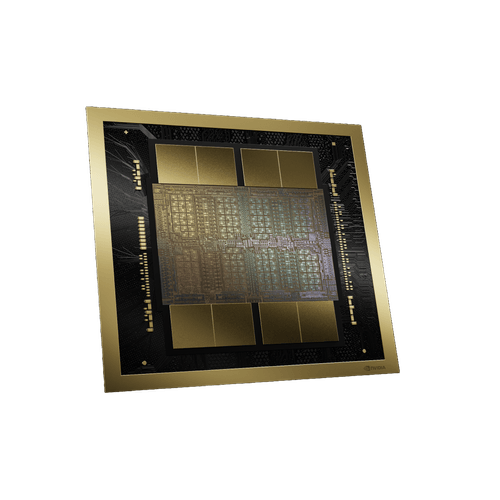

NVIDIA HGX™ B200

High-performance server platform purpose-built for the Blackwell architecture.

The NVIDIA HGX B200 combines eight Blackwell GPUs, interconnected via fifth-generation NVLink, into a single high-density compute node. It is built for generative AI and LLM inference.

Architecture Highlights

15x Inference Performance

Up to 15x the LLM inference performance of previous generations.

1.1 TB Memory

1,152 GB of total HBM3e capacity across eight GPUs to support the largest models.

Application Scenarios

Optimized for a variety of advanced computational workloads.

Generative AI Training

Rapid iteration of GPT-scale and similar large pre-trained models.

Real-time LLM Inference

Serving millions of users with low-latency content generation.

Core Capabilities

2.5 PFLOPS FP8 Performance

144 GB HBM3e Memory

1.8 TB/s NVLink Bandwidth

Superior Energy Efficiency

Technical Specifications

| Specification | Value |
| --- | --- |
| GPU Quantity | 8x Blackwell B200 |
| Compute (FP8) | 36 PFLOPS |
| Total Memory | 1152 GB |
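
The memory figures on this page can be cross-checked with simple arithmetic: eight GPUs at 144 GB each give the 1152 GB total, which is roughly 1.1 TB when divided by 1024. The Python sketch below shows that calculation and a rough estimate of whether a model's weights would fit in the node. The function names, the 80% headroom factor, and the example model sizes are illustrative assumptions, not NVIDIA figures, and the estimate ignores KV cache, activations, and framework overhead.

```python
# Values taken from this page.
GPU_COUNT = 8          # "GPU Quantity" row of the specification table
HBM_PER_GPU_GB = 144   # "144 GB HBM3e Memory" under Core Capabilities


def total_memory_gb(gpu_count: int = GPU_COUNT,
                    per_gpu_gb: int = HBM_PER_GPU_GB) -> int:
    """Aggregate HBM3e capacity of the node in GB."""
    return gpu_count * per_gpu_gb


def weights_footprint_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights only.

    1e9 parameters * bytes_per_param / 1e9 bytes per GB simplifies to
    params_billion * bytes_per_param.
    """
    return params_billion * bytes_per_param


def fits_in_node(params_billion: float, bytes_per_param: float,
                 headroom: float = 0.8) -> bool:
    """Hypothetical check: do the weights fit in a fraction of total memory?

    The headroom factor (assumed 0.8 here) reserves space for everything
    that is not weights.
    """
    budget_gb = total_memory_gb() * headroom
    return weights_footprint_gb(params_billion, bytes_per_param) <= budget_gb


if __name__ == "__main__":
    total = total_memory_gb()
    print(f"Total memory: {total} GB (about {total / 1024:.1f} TB)")
    # Illustrative model sizes, not tied to any specific product.
    print("400B params at 1 byte each fits:", fits_in_node(400, 1.0))
    print("1000B params at 2 bytes each fits:", fits_in_node(1000, 2.0))
```

Under these assumptions, a hypothetical 400-billion-parameter model stored at one byte per parameter (for example FP8) needs about 400 GB for weights and fits within the budget, while a 1000-billion-parameter model at two bytes per parameter does not.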