NVIDIA HGX H200

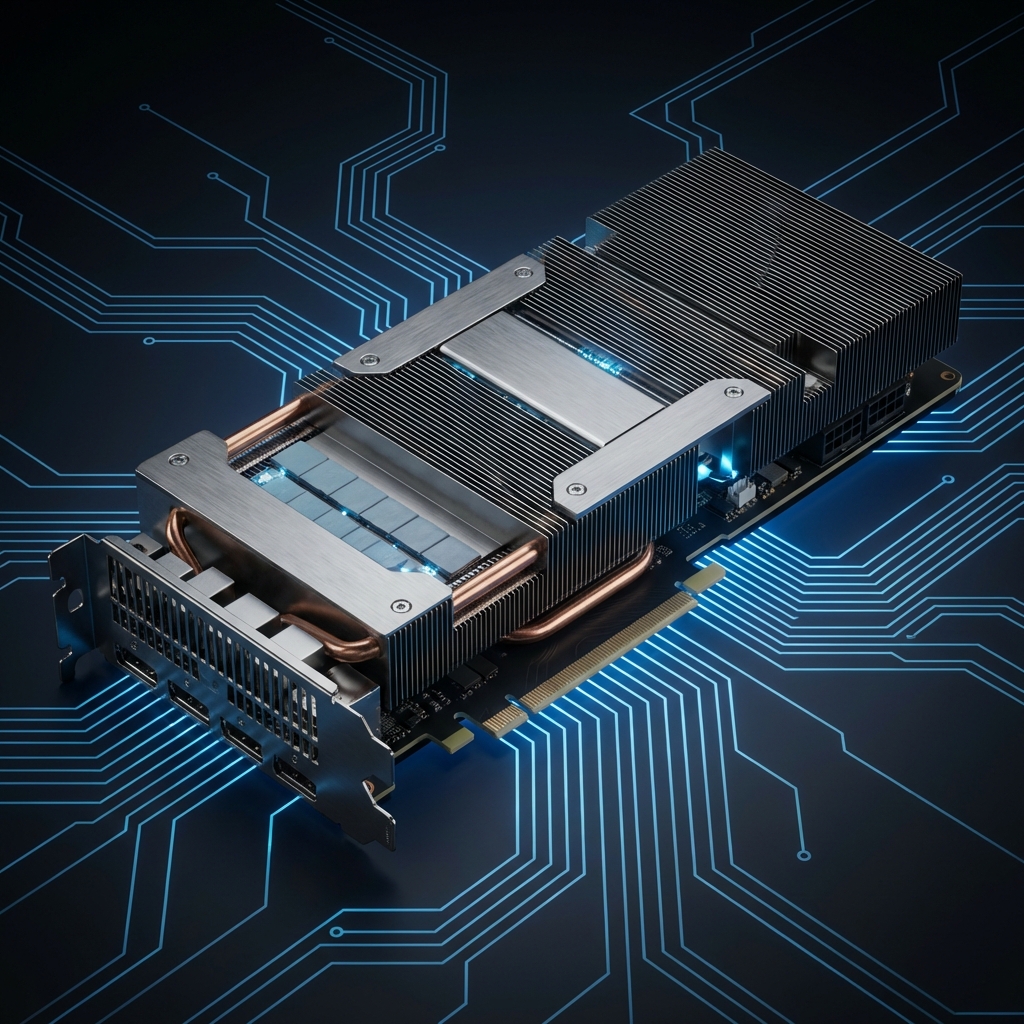

NVIDIA HGX™ H200

The performance peak of the Hopper architecture with massive memory capacity.

HGX H200 is the premier platform for mainstream large-model training today. With HBM3e, each H200 GPU provides 141GB of memory and 4.8TB/s of memory bandwidth, so very large models and datasets can be processed more efficiently.

Architecture Highlights

4.8TB/s Bandwidth

Eliminating memory bottlenecks in large model training.
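To make the bandwidth figure concrete, here is a rough back-of-the-envelope sketch. It estimates the minimum time to read a model's weights once from HBM at 4.8TB/s. The function name, the 70-billion-parameter model, and the 2-bytes-per-parameter (16-bit) format are illustrative assumptions, not figures from this page, and the result is only a lower bound that ignores compute, caching, and interconnect overhead.

```python
def weight_stream_time_ms(params_billion: float,
                          bytes_per_param: float,
                          bandwidth_tbs: float = 4.8) -> float:
    """Lower-bound time (ms) to read every weight once from GPU memory.

    bandwidth_tbs defaults to the 4.8TB/s quoted on this page; the other
    arguments are hypothetical inputs chosen by the caller.
    """
    model_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tbs * 1e12
    return model_bytes / bandwidth_bytes_per_s * 1e3


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored at 2 bytes per parameter.
    t = weight_stream_time_ms(params_billion=70, bytes_per_param=2)
    print(f"One full pass over the weights takes at least {t:.1f} ms")
```

In memory-bound workloads this read time, rather than raw compute, tends to set the floor on step latency, which is why higher bandwidth matters.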

1.4x Throughput

Significant improvement over H100 in specific workloads.

Application Scenarios

Optimized for a variety of advanced computational workloads

Massive HPC

Used in weather forecasting, drug discovery, and other precision-critical fields.

Deep Learning R&D

Providing a stable underlying environment for research institutions.

Core Capabilities

141GB HBM3e Memory

4.8TB/s Memory Bandwidth

Exceptional Inference Performance

Industrial-Grade Reliability

Technical Specifications

| Specification | Value |
| --- | --- |
| GPU Quantity | 8x Hopper H200 |
| Memory Type | HBM3e |
| AI Compute | 32 PFLOPS |
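The figures on this page can be combined into platform-level totals. The sketch below assumes that 141GB and 4.8TB/s are per-GPU values and that 32 PFLOPS covers all eight GPUs; the class and field names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HgxH200Spec:
    """Figures quoted on this page; names are illustrative only."""
    gpu_count: int = 8                   # "8x Hopper H200"
    memory_per_gpu_gb: float = 141.0     # assumed per-GPU HBM3e capacity
    bandwidth_per_gpu_tbs: float = 4.8   # assumed per-GPU memory bandwidth
    ai_compute_pflops: float = 32.0      # assumed whole-platform figure

    def total_memory_gb(self) -> float:
        # Aggregate HBM3e capacity across all GPUs.
        return self.gpu_count * self.memory_per_gpu_gb

    def total_bandwidth_tbs(self) -> float:
        # Aggregate memory bandwidth across all GPUs.
        return self.gpu_count * self.bandwidth_per_gpu_tbs

    def compute_per_gpu_pflops(self) -> float:
        # Platform AI compute divided evenly across GPUs.
        return self.ai_compute_pflops / self.gpu_count


if __name__ == "__main__":
    spec = HgxH200Spec()
    print(f"Total memory:     {spec.total_memory_gb():.0f} GB")
    print(f"Total bandwidth:  {spec.total_bandwidth_tbs():.1f} TB/s")
    print(f"Compute per GPU:  {spec.compute_per_gpu_pflops():.1f} PFLOPS")
```

Under these assumptions the platform totals roughly 1.1TB of HBM3e, which is a useful first check when sizing a model against a single node.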